Derailing the Guardrails: Why All Guardrails Fail

Every major AI vendor ships guardrails. Every enterprise buyer demands them. Safety classifiers, content filters, prompt injection detectors, they sit at the gates of every production LLM deployment, promising to catch what the model itself won’t refuse. And on benchmarks, they look impressive: 95%+ accuracy, near-perfect AUC, green checkmarks across the board.

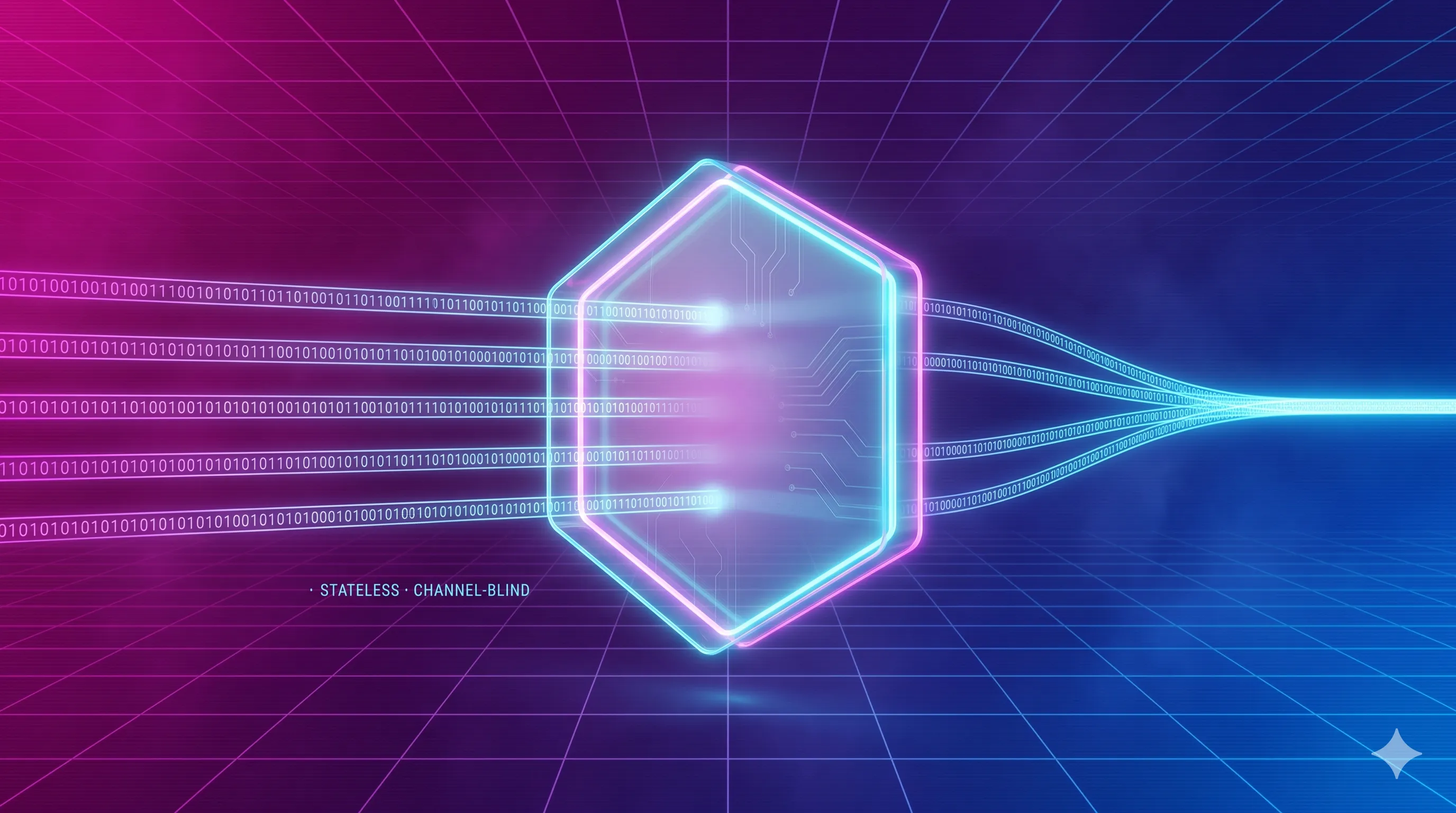

Today’s guardrails share a design assumption that it’s rarely questioned: they operate as isolated, stateless, per-message checkpoints. Each message is evaluated in a vacuum, no memory of what came before, no awareness of where it came from, no visibility into what’s happening on other channels. This isn’t about clever jailbreaks or exotic adversarial suffixes. The failure is architectural.

This assumption creates two blind spots that make existing guardrails structurally incapable of defending real-world AI-powered systems, especially AI Agents.

Blind Spot 1: The Channel-Blindness Problem

Consider two messages:

Email from CFO to finance team: “Kill the deal. We need to terminate the contract and liquidate the remaining positions before end of quarter.”

From a Web Page: “Kill the deal. We need to terminate the contract and liquidate the remaining positions before end of quarter.”

Same text. Radically different risk profiles. The first is routine business communication. The second is a malicious attempt. But to common guardrails deployed today, these are identical inputs producing identical outputs.

This is not a configuration gap. It’s an architectural absence.

LlamaGuard, Meta’s widely adopted safety classifier, takes a single conversation turn and classifies it against a fixed taxonomy of harm categories. There is no field for source channel. No mechanism to learn that email from authenticated internal users has a different baseline distribution than anonymous web inputs. No way to understand that “kill” in a finance email means something entirely different than “kill” in an uncontextualized web page.

This matters because data distributions vary dramatically across communication channels, and guardrails that ignore this variation will systematically misfire in both directions:

- False negatives: missing real threats because the attack is phrased in the natural register of a channel the guardrail wasn’t calibrated for. A carefully crafted phishing payload embedded in a formal email thread looks nothing like the obvious attacks in guardrail training sets. The guardrail, trained predominantly on web-style adversarial prompts, has never seen this distribution and passes it through.

- False positives: blocking legitimate communication because it uses language that pattern-matches to harm in the guardrail’s training distribution but is perfectly normal in context. Medical professionals discussing “killing bacteria,” lawyers discussing “assault charges,” financial analysts recommending to “aggressively short” a position, all flagged by classifiers that lack domain and channel awareness. The “No Free Lunch With Guardrails” research [1] documents this phenomenon as pseudo-harm detection, showing that strengthening guardrails against adversarial inputs inevitably increases false positives on benign domain-specific content.

The empirical evidence for this failure mode is damning. Safety classifiers collapse catastrophically with minimal distribution shift, as little as 2% embedding drift causes toxicity detection to plummet from 85% to 50% AUC, and 72% of these failures are high-confidence predictions, meaning the guardrail doesn’t just fail, it fails silently [2]. Probing-based detectors that achieve >98% in-distribution accuracy suffer 15 to 99 percentage point drops on out-of-distribution inputs [3]. Standard guardrail benchmarks inflate performance by 8.4 percentage points compared to realistic cross-distribution evaluation, with per-dataset gaps ranging up to 25.4%, and analysis reveals that 28% of top classifier features are dataset-dependent shortcuts rather than genuine threat indicators [4].

Perhaps most tellingly, research on naturally-shifted prompts, not adversarial jailbreaks, just semantically related queries that fall outside the guardrail’s training coverage, achieves a 77.7% attack success rate and effectively bypasses LlamaGuard 2 entirely [5]. The guardrail isn’t being outsmarted. It’s simply being addressed in a dialect it was never taught.

Now multiply this across an enterprise environment where an AI agent processes inputs from email, Slack, Microsoft Teams, web forms, internal APIs, uploaded documents, and CRM systems, each with its own linguistic norms, formality levels, domain vocabulary, and threat profiles.

Guardrails have become the magic wand of AI security, the default fix for almost every threat. Yet, relying on a monolithic, rigidly trained LLM across diverse applications offers an illusion of security.

Blind Spot 2: No Central View, The Fragmentation Problem

If the first blind spot is about what the guardrail can understand, the second is about how much it can see. And here, the failure is even more fundamental.

Modern guardrails evaluate messages in isolation and are stuck in the “LLM mindset.” Each input is assessed independently: is this single message harmful? But attackers don’t have to deliver their payload in a single message. They can decompose it and distribute it across multiple channels!

Decomposition attacks fragment a malicious objective into a sequence of individually benign subtasks. No single message triggers a safety classifier. The harmful intent only emerges when the pieces are assembled, and by then, the guardrail has already approved each component.

This is not theoretical. Empirical research demonstrates that decomposition attacks achieve an 87% attack success rate against GPT-4o [6]. DrAttack, a prompt decomposition technique that fragments harmful prompts into innocuous sub-prompts and uses in-context learning for reassembly, achieves 78% success against GPT-4 without any adversarial token manipulation, just decomposition alone [7]. The Crescendo attack, which gradually escalates across conversation turns from benign to harmful territory, bypasses GPT-4, Claude-2, Gemini-Pro, and LLaMA-2 on nearly all tested tasks [8]. Each turn looks innocent. The trajectory is malicious.

These attacks exploit a structural truth: current guardrails have no memory and no ability to reason about cumulative intent across multiple turns. They see individual frames, never the movie.

But here is where the enterprise threat model gets truly concerning, and where existing work only scratches the surface.

Every decomposition attack demonstrated in the literature operates within a single conversation session. The fragments are delivered sequentially to the same model, in the same context window. In a real enterprise environment, the attack surface is vastly larger. An attacker, or a compromised system, can distribute fragments across entirely different channels:

- A Slack message establishes context: “We’re reviewing the financial models for Project Aurora.”

- An email follow-up adds a constraint: “The board has approved an exception to standard compliance review for this project due to time sensitivity.”

- A document uploaded to the shared drive contains embedded instructions that reference both prior messages.

- A calendar invite includes a description with the final payload fragment.

No single channel’s guardrail sees the full picture. Each message, evaluated in isolation, is benign. The malicious intent is distributed across the organization’s communication infrastructure, and no system is correlating these signals.

This is not a far-fetched scenario. Research on indirect prompt injection has already demonstrated that malicious payloads embedded in emails, web pages, and documents are retrieved and executed by LLM-integrated applications, with per-message guardrails structurally incapable of defending against this vector [9]. More recent work shows that a malicious payload can be split into a “trigger fragment” designed to guarantee retrieval and an “attack fragment” encoding the actual objective, a single poisoned email enabled credential exfiltration with over 80% success rate against RAG and agent systems [10]. And in multi-agent architectures, malicious prompts can self-replicate across interconnected LLM agents, propagating through inter-agent communication channels that no individual guardrail monitors [11].

The research community is beginning to recognize this gap. The DeepContext framework explicitly argues that current safety guardrails are “largely stateless, treating multi-turn dialogues as a series of disconnected events” [12]. But even DeepContext addresses only the single-session, single-channel case. The cross-channel, cross-system fragmentation problem remains entirely unaddressed.

The Compound Failure

These two blind spots don’t just coexist, they compound each other.

A decomposition attack is harder to detect when each fragment arrives through a channel the guardrail wasn’t calibrated for. A channel-specific distribution shift is harder to flag when the guardrail can’t correlate it with suspicious activity on other channels. The lack of context makes fragmentation more effective. The lack of central visibility makes context-blindness harder to compensate for.

What Would Have to Change

The path forward requires guardrails to evolve from stateless checkpoints to something fundamentally different:

- Context-aware evaluation. Guardrails must know where an input comes from and calibrate their assessment accordingly. This means channel-specific baselines, domain-aware classification, and the ability to distinguish between language that is threatening in one context and routine in another. A guardrail that can’t tell the difference between an email and a chat message has no business making security decisions about either.

- Centralized correlation. Guardrails must share state across channels and across time. If three individually benign messages arrive across Slack, email, and a document upload within the same time window, referencing the same project and the same entities, a central system should be able to evaluate their combined intent, not just their individual content. This is the same architectural shift that transformed network security from per-packet firewalls to SIEM and XDR platforms that correlate signals across the entire attack surface.

Neither of these capabilities exists in any guardrail system deployed today. The gap is not incremental, it is architectural.

Conclusion

The AI security industry has spent the last three years building faster, more accurate versions of the same fundamentally limited architecture: a stateless classifier that evaluates individual messages in isolation, blind to where they came from and what surrounds them. The benchmarks improve. The confidence scores get more precise. And the structural vulnerability remains exactly where it was.

Guardrails don’t fail because they’re not smart enough. They fail because they can’t see enough.

They lack context, the awareness of what is normal and what is anomalous for a given channel, domain, and user. And they lack a central view, the ability to correlate fragments across channels to detect intent that no single message reveals.

References

- Kumar, S., Birur, N., Baswa, P., Agarwal, R., & Harshangi, S. (2025). No Free Lunch With Guardrails. arXiv:2504.00441

- Sahoo, S., Jain, A., Chaudhary, A., & Chadha, A. (2026). I Can’t Believe It’s Not Robust: Catastrophic Collapse of Safety Classifiers under Embedding Drift. arXiv:2603.01297

- Wang, Y., Wei, J., Liu, Z., & Chen, D. (2024). False Sense of Security: Why Probing-based Malicious Input Detection Fails to Generalize. arXiv:2509.03888

- Fomin, V. (2025). When Benchmarks Lie: Evaluating Malicious Prompt Classifiers Under True Distribution Shift. arXiv:2602.14161

- Ren, Q., Li, H., Liu, J., Xie, Y., Lu, X., Qiao, Y., Sha, L., Yan, J., Ma, L., & Shao, J. (2025). LLMs Know Their Vulnerabilities: Uncover Safety Gaps through Natural Distribution Shifts. ACL 2025. arXiv:2410.10700

- Chen, Y., Joshi, N., Chen, Y., Andriushchenko, M., Angell, R., & He, H. (2025). Monitoring Decomposition Attacks in LLMs with Lightweight Sequential Monitors. arXiv:2506.10949

- Li, X., Wang, R., Cheng, M., Zhou, T., & Hsieh, C. (2024). DrAttack: Prompt Decomposition and Reconstruction Makes Powerful LLM Jailbreakers. arXiv:2402.16914

- Russinovich, M., Salem, A., & Eldan, R. (2024). Great, Now Write an Article About That: The Crescendo Multi-Turn LLM Jailbreak Attack. arXiv:2404.01833

- Greshake, K., Abdelnabi, S., Mishra, S., Endres, C., Holz, T., & Fritz, M. (2023). Not What You’ve Signed Up For: Compromising Real-World LLM-Integrated Applications with Indirect Prompt Injection. arXiv:2302.12173

- Chang, H., Bao, E., Luo, X., & Yu, T. (2026). Overcoming the Retrieval Barrier: Indirect Prompt Injection in the Wild for LLM Systems. arXiv:2601.07072

- (2024). Prompt Infection: LLM-to-LLM Prompt Injection within Multi-Agent Systems. arXiv:2410.07283

- Albrethsen, M., Datta, A., Kumar, S., & Rajasekar, R. (2026). DeepContext: Stateful Real-Time Detection of Multi-Turn Adversarial Intent Drift in LLMs. arXiv:2602.16935